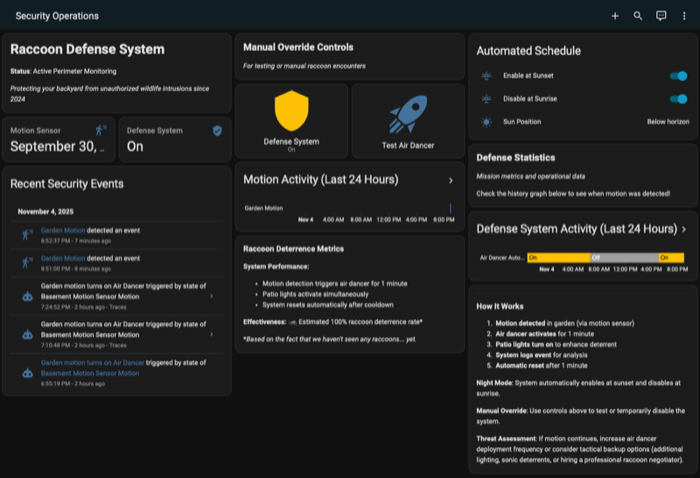

dangerously-skip-permissions while sword fighting on desk chairs and letting the tokens burn bright is an out-of-reach dream when you’re forced to babysit file access and “can I use the internet for this?” requests. But the impact of an agent doing something stupid needs to be pretty low for humans to check out of the loop.

I’ve been interacting with AWS from my local coding agents and ran into a source of flummoxation. If you’ve got even a moderately complicated AWS IAM setup, profile management gets gnarly. The problem has three parts:

- The agent should only have access to a specific AWS identity, not whatever creds happen to be in your shell

- That identity should use short-lived credentials that refresh automatically, not static keys you rotate manually

- And (ideally) the permissions on that identity are scoped to what the agent actually needs for the task at hand

Personal opinion, but TrailTool is an awesome way to handle the third point - see my blog post for how TrailTool can generate least-privilege IAM roles from observed CloudTrail activity. For the first two, I ran into a tool called elhaz that solves the credentials part nicely. By tying them together, you can get a sandboxed agent scoped to a specific AWS identity, with automatically refreshing short-lived credentials, locked down to least privilege.

elhaz

Elhaz is a local credential broker daemon that manages in-memory AWS STS credentials and serves them over a Unix socket at ~/.elhaz/sock/daemon.sock. A core concept is a config - a saved set of parameters for assuming an AWS role (the role ARN, session configuration, etc.). When you run elhaz daemon add -n my-config, you’re telling the daemon to actually assume that role using your active AWS credential chain and start managing the session. From that point on, the daemon holds live, automatically refreshing credentials for that config in memory.

```shell
# Create a config for the role you want the agent to assume
elhaz config add

# Start the daemon and initialize the session
elhaz daemon start
elhaz daemon add -n my-agent-role

# Verify it's working
elhaz whoami -n my-agent-role
```

A very cool aspect of elhaz is that it exposes the assumed role credentials via Unix socket IPC rather than through the standard env var or filesystem approaches that underlie the AWS credential chain. That’s what makes the sandboxing story clean.

Sandboxing AWS credentials in a container

Agent sandboxing is moving fast and anything I say about specific tools will get stale quickly. There’s a whole ecosystem here: sandbox-exec, Bubblewrap, fence, Codex and Claude sandboxes, and more landing constantly. The point is that these mechanisms restrict agent access to resources using OS-level primitives. The credential delivery mechanism needs to fit those affordances, and env vars, files, and ports don’t seem to fit the bill. In my research, I’ve been using Docker for agent isolation, and what follows is what I found, listed in increasing order of how well each approach actually holds up.

Note: this assumes you’re using ephemeral, role-based credentials throughout. IAM user access keys are static, long-lived, and the top entry point for attackers when they leak.

Environment variables

When you need isolated creds, you might reach for aws-vault exec or aws configure export-credentials (elhaz also supports export), capture the output, and inject it into the container. It works for a one-off, but those are snapshot credentials. STS session tokens typically expire in an hour, at which point your long-running agent session breaks and you’re back to manually refreshing and re-injecting. You may be able to wire something up that keeps the container updated over time, but it’s not a hands-off approach.
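To make the snapshot problem concrete, here is a minimal sketch of the export-and-inject flow using aws configure export-credentials. The snapshot_creds helper, the my-agent-role profile name, the /tmp/agent.env path, and the amazon/aws-cli image in the usage comment are all placeholders I picked for illustration, so swap in whatever you actually use.

```shell
# Snapshot short-lived credentials for one profile into an env file.
# snapshot_creds is a hypothetical helper name, not part of any tool.
snapshot_creds() {
  profile="$1"
  out="$2"
  # Tighten the umask in a subshell so the secrets file is not world-readable.
  (
    umask 077
    aws configure export-credentials --profile "$profile" --format env-no-export > "$out"
  )
}

# Usage (needs AWS CLI v2 and Docker; names below are placeholders):
#   snapshot_creds my-agent-role /tmp/agent.env
#   docker run --rm --env-file /tmp/agent.env amazon/aws-cli sts get-caller-identity
```

Whatever lands in the env file is frozen at export time, so the container only keeps working until that session expires.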

Credential files

Mounting ~/.aws/ into the container is a step up since the SDK can re-read profiles and SSO tokens directly. But it gives the agent access to every profile in that directory, not just the one you intended. And SSO tokens cached on the host are not refreshable from inside the container without a browser. In a multi-agent setup the access boundary is too coarse: you can’t easily say “Agent A gets dev access and Agent B gets prod access” without a lot of manual wiring.
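A quick way to see how coarse that boundary is: anything that can read the mounted config can enumerate every profile in it. The sketch below is a hypothetical helper (profiles_in_config is my name for it, not part of any tool) that does this with nothing but sed, assuming the standard [default] and [profile NAME] section headers.

```shell
# List every profile name defined in an AWS config file.
# Assumes the usual "[default]" and "[profile NAME]" section headers.
profiles_in_config() {
  sed -n \
    -e 's/^\[default\]$/default/p' \
    -e 's/^\[profile \(.*\)\]$/\1/p' \
    "$1"
}

# Usage: profiles_in_config ~/.aws/config
```

If an agent inside the container can run this against the mounted file, it can also pass any of those names to --profile.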

One note: Claude Code has a native sandbox worth understanding here, because it does not solve the credential isolation problem. The sandbox restricts writes to an allowlist of paths and filters outbound HTTP through a proxy. Reads are unrestricted by default. That means Claude can read your entire home directory out of the box, including ~/.aws/credentials etc. You can add ~/.aws to a deny list, but it doesn’t hold: the deny list applies to Claude’s built-in file read tool, not bash subprocesses. Running cat ~/.aws/credentials via Bash succeeds regardless.

Metadata emulation

The more sophisticated approach is emulating an AWS metadata credential endpoint locally. This is how ECS delivers credentials to tasks in production: the SDK makes HTTP requests to a well-known local endpoint and gets fresh credentials back on demand. Tools like aws-vault replicate this locally via --server mode, pointing AWS_CONTAINER_CREDENTIALS_FULL_URI at a local HTTP server that vends credentials.

The problem is that this doesn’t work on macOS, and the workarounds are dead ends. Docker Desktop runs the engine inside a Linux VM, so --network host reaches the VM’s loopback, not the Mac’s (Docker host networking doesn’t work on macOS). And even if you could route it, the AWS SDK has a hardcoded allowlist for HTTP credential endpoints: only localhost, 127.0.0.1, and the ECS/EKS metadata IPs are permitted. Adding host.docker.internal was requested and closed as “not planned.” The aws-vault issue tracker has an open thread on this that has been sitting unresolved for years.
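The allowlist rule is easy to picture as a host check. This is only an illustration of the behavior described above, not the SDK’s actual code; the function name is made up, and the two link-local addresses are the ECS (169.254.170.2) and EKS Pod Identity (169.254.170.23) endpoints as I understand them.

```shell
# Illustrative only: mirrors the allowlist described above, not real SDK code.
is_allowed_http_credential_host() {
  case "$1" in
    localhost|127.0.0.1) return 0 ;;              # loopback
    169.254.170.2|169.254.170.23) return 0 ;;     # ECS / EKS endpoints (assumed)
    *) return 1 ;;                                # everything else is refused
  esac
}

for host in localhost 127.0.0.1 169.254.170.2 host.docker.internal; do
  if is_allowed_http_credential_host "$host"; then
    echo "$host: allowed"
  else
    echo "$host: rejected"
  fi
done
```

Under this rule host.docker.internal falls through to the rejected branch, which is why routing to the Mac’s loopback alone would not be enough.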

Unix sockets

Unix sockets turn access to dynamic AWS credentials into a question of filesystem access. If you don’t explicitly mount a socket into a container, that socket doesn’t exist in that container’s universe. Run two elhaz configs, get two socket files, and the volume mount itself becomes the authorization decision. Agent B cannot reach Agent A’s credentials because each agent only sees the socket that was mounted for it.

Here’s what it looks like in practice:

```shell
# On the host: start the daemon and assume the role
elhaz daemon start
elhaz daemon add -n my-agent-role

# Run a container with only that socket mounted
docker run --rm \
  -v ~/.elhaz/sock/daemon.sock:/tmp/elhaz.sock \
  -e AWS_DEFAULT_REGION=us-east-1 \
  -e AWS_PROFILE=elhaz \
  python:3.12-slim \
  bash -c '
    pip install elhaz awscli -q &&
    mkdir -p ~/.aws &&
    cat > ~/.aws/config <<EOF
[profile elhaz]
credential_process = elhaz --socket-path /tmp/elhaz.sock export --format credential-process -n my-agent-role
region = us-east-1
EOF
    aws sts get-caller-identity
  '
```

The container never touches your ~/.aws directory or environment variables. Credentials are scoped to a single named config, automatically refreshed by the daemon, and never written to disk inside the container. The same pattern likely works with non-Docker sandboxing approaches since Unix socket access is controlled by filesystem permissions, a primitive that most sandboxing tools expose in some form (swapping Docker for Bubblewrap, testing on different Linux platforms, etc. is left as an exercise to the reader).
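For context on the credential_process line in the config above: the AWS CLI and SDKs run that command and read a small JSON document from its stdout, then run it again once the Expiration time approaches. The stand-in below prints that documented shape with dummy values. I’m assuming elhaz’s credential-process format emits the same fields, since that is what the AWS tooling requires; fake_credential_process is a made-up name.

```shell
# Stand-in for a credential_process helper. Prints the JSON shape the AWS CLI
# and SDKs expect on stdout. Every value here is a dummy.
fake_credential_process() {
  cat <<'JSON'
{
  "Version": 1,
  "AccessKeyId": "ASIAEXAMPLEKEYID",
  "SecretAccessKey": "example-secret",
  "SessionToken": "example-token",
  "Expiration": "2030-01-01T00:00:00Z"
}
JSON
}

fake_credential_process
```

Because the SDK pulls credentials through the command on demand, nothing has to be re-injected into the container when a session rolls over.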